Essay · 2026

Your AI Agent Stack Is Solving The Wrong Problem

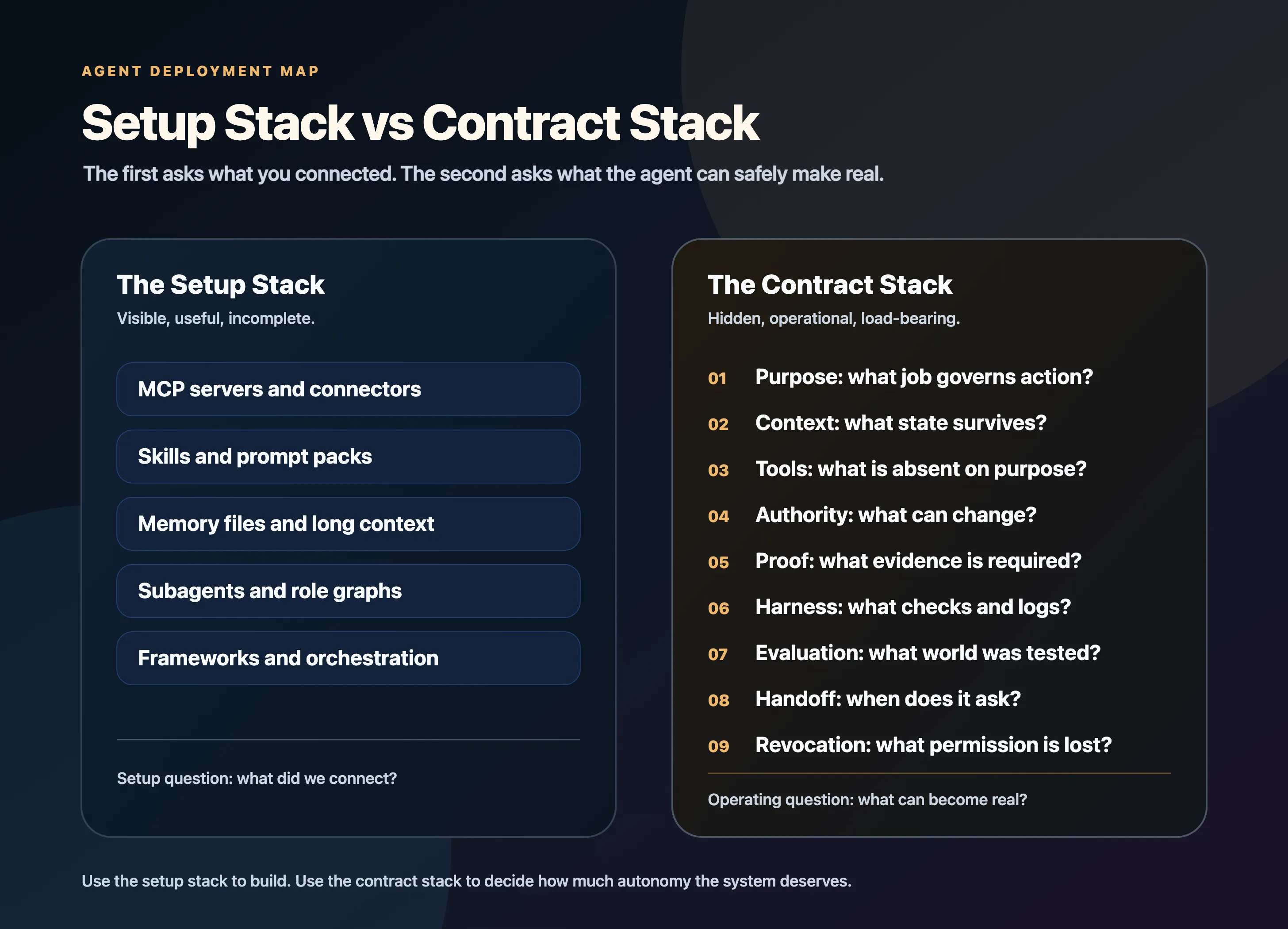

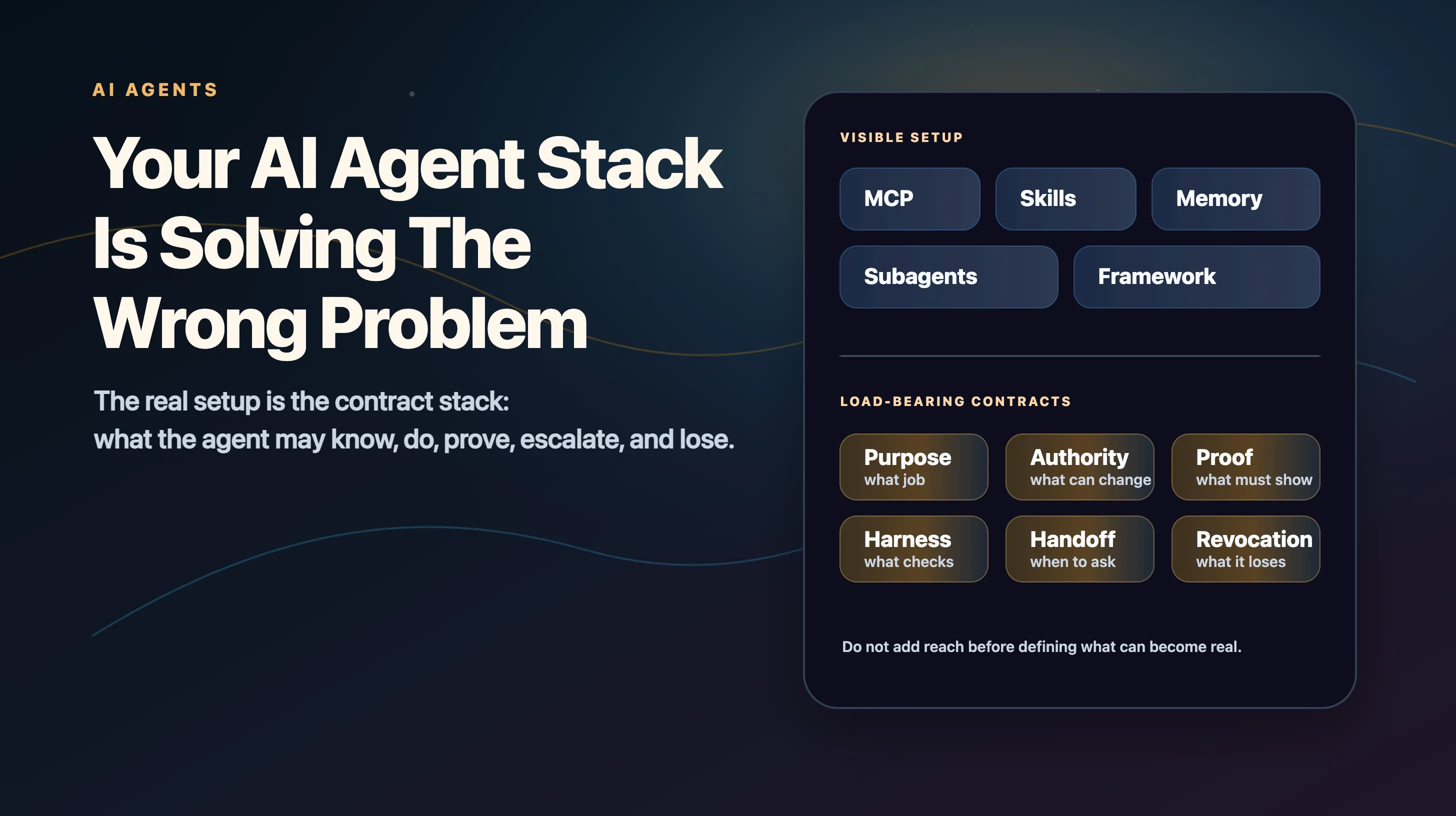

The real setup is not MCP servers, skills, memory files, and subagents. It is the contract stack that decides what an agent may know, do, prove, escalate, and lose.

The setup everyone is sharing

Which MCP servers to install. Which skills to keep in your repo. Which agent framework to use. How to write your AGENTS.md. How to split one agent into researcher, planner, coder, and reviewer. How to wire Slack, GitHub, Notion, Postgres, Stripe, your calendar, and your file system into one increasingly capable loop.

Some of that advice is useful. It is also aimed at the wrong layer.

What becomes real after the agent uses a tool matters more than whether it can reach the tool.

Can it read the customer record, or change it? Can it draft the refund, or issue it? Can it open a pull request, or merge it? Can it propose the vendor response, or send it under the company name?

Once an agent can act through tools, the real system is no longer the model.

The real system is the contract stack around the model.

That is the part most setup guides skip.

Access is reach. Agency is permissioned action.

Imagine the demo.

The agent can read Slack. It can search email. It can query the CRM. It can open GitHub issues, check billing records, browse docs, edit a spreadsheet, draft a customer reply, and call three internal APIs.

Everyone in the room calls it powerful.

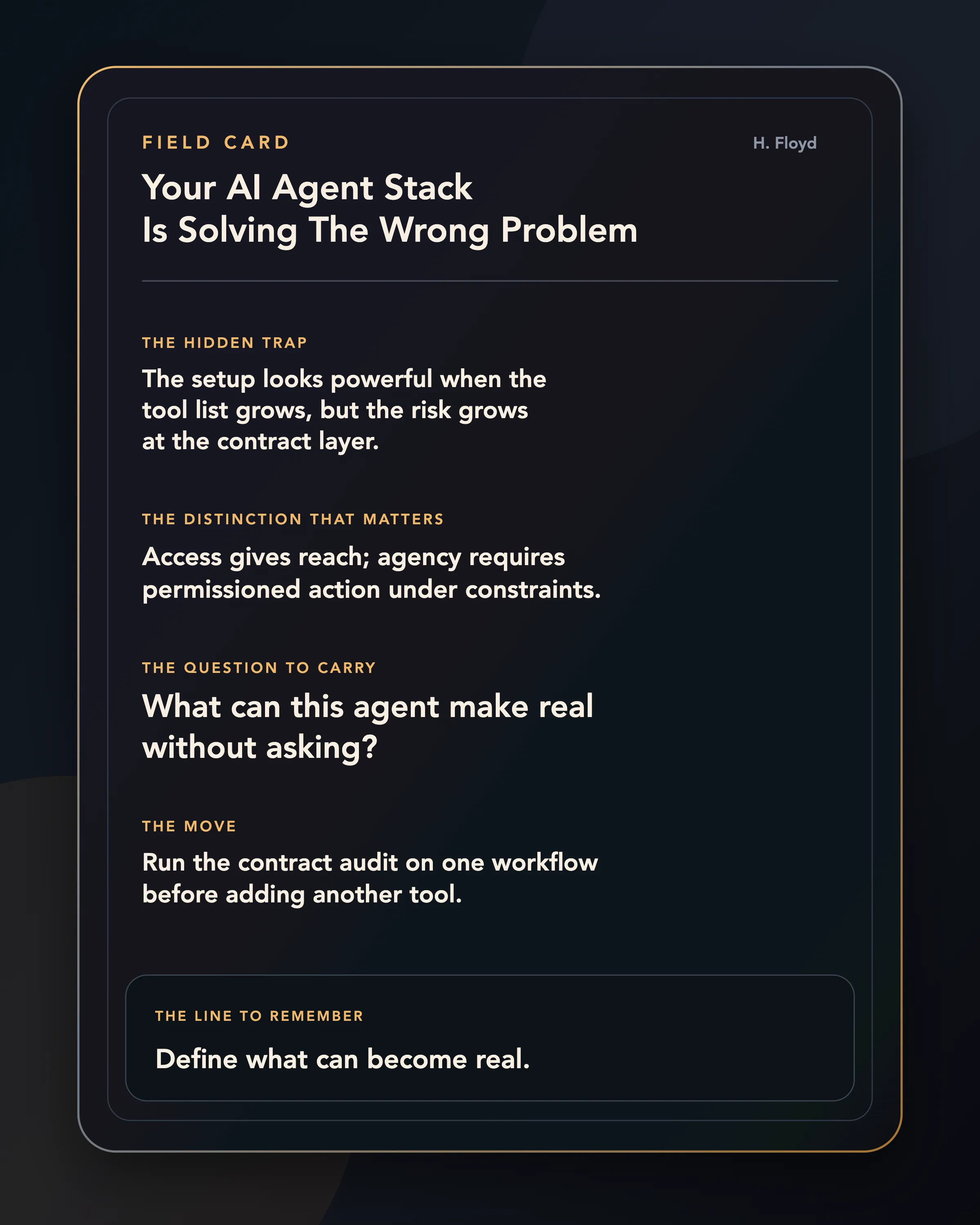

That is the first mistake.

The agent has reach. It does not yet have governed agency.

Access tells you what the agent can touch. Agency tells you what the agent is authorised to decide, under which conditions, with what proof, and with what consequence after failure.

That distinction sounds small until the first bad run.

A read-only research assistant can waste time. An agent with billing access can create obligations. An agent with email access can speak for the company. An agent with deployment access can turn a wrong inference into infrastructure.

More tools do not automatically make the agent more agentic.

More tools expand the surface on which judgement has to be engineered.

The tool stack is visible. The contract stack is load-bearing.

The visible agent stack is easy to list: model, prompt, memory, tools, MCP servers, subagents, framework, evals.

That stack matters. It is also not the operating system.

The operating system is the set of contracts each layer creates.

What is the agent for? What state may it see? What state may it preserve? Which tools may it call? Which tools are intentionally absent? What can it change? What must it prove before the change becomes binding? What does the harness log? What does the evaluation score actually cover? When does the agent ask, abstain, or escalate? What permission disappears after a bad run?

That is the real setup.

Not the list of tools.

The set of boundaries that decides what the tools mean.

The generic setup stack asks what you connected.

The contract stack asks what you can trust.

An agent is a control loop, not a prompt with ambition

An agent is an outer control loop wrapped around a generator. It plans, reads state, chooses tools, acts, observes, repairs, escalates, and decides whether to continue.

The failure rarely sits in one glamorous place.

It can sit in the planner. It can sit in retrieval. It can sit in a tool description. It can sit in retry logic. It can sit in a hidden assumption about whether the world waits while the agent thinks.

That is why framework comparisons are often less useful than they look.

The distinction that matters is which parts of the loop are explicit enough to inspect.

If planning is hidden inside one long natural-language instruction, you cannot repair planning without rewriting the whole prompt.

If memory is just a growing transcript, you cannot tell whether the agent remembered, retrieved, inferred, or hallucinated.

If tool choice is unlogged, you cannot tell whether the answer is wrong because the model reasoned badly or because it called the wrong thing.

If evaluation is one final pass/fail number, you cannot tell whether the agent failed at discovery, parameters, sequencing, recovery, escalation, or judgement.

Agents do not become reliable when the setup becomes more impressive.

They become reliable when failure has somewhere specific to land.

MCP is not magic glue

MCP matters. Skills matter. Connectors matter.

But their importance is often described backwards.

The lazy version says MCP is valuable because it gives agents more tools.

The better version says MCP is valuable because it makes the tool boundary explicit enough to inspect, version, test, authorise, and debug.

A tool is not neutral plumbing. A tool description tells a nondeterministic system what an action means. The name, parameters, return shape, error messages, and allowed mutations all change behaviour.

A tool built for a human developer is not automatically a good tool for an agent. Humans carry missing context. Agents need the contract written down.

That is why more tools can make an agent worse.

At small scale, tool access feels like freedom. At larger scale, tool access becomes search. The agent has to identify the right tool, pass valid parameters, recover from partial failure, and avoid inventing a successful trace when the tool call failed.

If you expose every API endpoint as a tool, you do not have a powerful agent surface.

You have a vocabulary problem with write access.

The mature move is not “connect everything.”

The mature move is to design the smallest tool surface that lets the agent do the job, then make every tool contract legible. What does the tool do. When should it be used. What the return value proves, and what it does not prove. What failures look like. Which calls are read-only, which mutate state, which require approval. Where the trace goes.

That is how you stop a transcript from becoming the only place your operating system exists.

Skills are not prompt snippets

The same mistake happens with skills.

People treat skills as better prompts: a SKILL.md, a few examples, some instructions, maybe a script. Useful. Portable. Easy to share.

But a serious skill is not a prompt snippet.

It is packaged operating knowledge.

It should contain a trigger, a procedure, a boundary, gotchas, and a failure mode.

The “gotchas” are usually the most valuable part. The model often already knows the happy path. What it does not know is your local scar tissue: which API lies, which file must not be edited, which naming convention breaks deployment, which customer segment changes the policy.

That is why generic skill catalogues have a ceiling.

They can teach a model the common workflow.

They cannot teach it which parts of your workflow are load-bearing unless you package that knowledge yourself.

Skills are valuable because they let operational knowledge travel across sessions and agents. They are dangerous when they activate at the wrong time, compose implicitly into deeper graphs nobody intended, or grant state-changing behaviour without a permission contract.

The real question is sharper:

When this skill activates, what decision is it allowed to influence?

If nobody can answer that, the skill is just a more durable way to make the wrong move.

Memory is governed state, not a bigger past

Memory has the same problem.

Every agent product wants to promise memory. It sounds obvious. The agent should remember the user, the project, the codebase, the customer history, the prior decision, the mistake from last time.

But memory is not “more context.”

Memory is a four-part contract: what gets written, how it is organised, how it is retrieved, how it is governed.

If the agent writes too much, memory becomes sludge.

If it summarises badly, memory becomes distortion.

If it retrieves by similarity alone, memory becomes vibes with citations.

If it never forgets, memory becomes context poisoning.

If it cannot show why a memory was used, memory becomes an invisible authority.

The memory question worth asking:

Which state should survive because it will improve future decisions, and which state should expire because it will poison them?

That is a contract question.

It is also why a 500-word, well-maintained project note can outperform a giant chat history. The smaller note has a job. The transcript merely has volume.

The real setup is the contract stack that decides what an agent may know, do, prove, escalate, and lose.

The harness is where autonomy becomes measurable

Most agent demos make the model look like the protagonist.

In production, the harness is the protagonist.

The harness is everything that surrounds the weights: task boundaries, tools, retry budgets, permission gates, stop rules, and evidence artefacts.

Change the harness and the same model can look like a different system.

That should make us suspicious of agent benchmarks that treat the model as the only object being compared. A published score does not measure a disembodied model. It measures a deployment regime, scaffold, metric, and judge.

Was the world static or changing? Did the agent see a screenshot, HTML, an accessibility tree, a database row, or a curated prompt? How many retries did it get? Did it have tools? Which ones? Was the grader human, model-based, rubric-based, trajectory-aware, or outcome-only? Did the metric reward one lucky success or repeated consistency?

These are not footnotes.

They are the contract.

If your eval sits two regimes below deployment, treat it as lab evidence.

If it tests read-only draft behaviour, do not use it to justify automatic writes.

If it rewards pass@k, do not pretend it proves worst-run reliability.

If it grades only final answers, do not pretend it inspected tool behaviour.

If it hides traces, do not pretend it supports auditability.

The score is not the contract.

The score is one output of a contract you have to name.

The dangerous middle

There is a tempting objection here.

If every action needs a contract, won’t we kill the point of agents?

Yes, if we do it badly.

The answer is not to wrap every agent in a permission wall so thick it becomes useless.

The answer is to match authority to consequence.

Read-only search should be cheap. Drafting should be cheap. Local reversible edits should be cheaper than external irreversible commitments. Actions that affect money, identity, infrastructure, or customer communication should pass through stronger gates.

Good agent authority is not one wall.

It is a slope.

The agent gets wider freedom where mistakes are cheap, visible, and reversible. It gets narrower freedom where mistakes are expensive, silent, and hard to undo.

Older institutions already know this.

A junior analyst can see a model. They cannot approve the trade.

A support rep can view a customer record. They cannot issue a large refund without approval.

An engineer can open a pull request. They cannot deploy to production alone.

A finance employee can prepare a payment. They cannot release it without a second sign-off.

Organisations separate seeing, recommending, approving, executing, logging, and reviewing because authority is not a binary.

Agents force software teams to rediscover that inside product architecture.

The missing layer is revocation

Most agent setups have a permission story.

Few have a revocation story.

That is the giveaway.

A human loses trust after a bad judgement. They may lose budget authority, approval rights, admin permissions, unsupervised access, or the ability to act without review.

Most agents fail, get patched, and return with the same action surface.

That is not learning.

That is amnesia with API keys.

If a bad run does not shrink future permissions, the system has no operational immune response.

Revocation does not have to be dramatic. After one unsafe draft, require review for that category. After one wrong tool call, remove that tool until the contract is fixed. After one stale-memory error, force a memory review before reuse. After one hallucinated trace, require deterministic evidence for the next run. After one escalation miss, lower the threshold for asking a human.

That is the difference between an agent that is merely corrected and an agent system that becomes safer.

The hard part is not giving the agent a tool.

The hard part is deciding when the tool stops being available.

The Agent Contract Stack Audit

Run this on one agent workflow you are tempted to trust.

Not the whole company. Not your entire AI strategy. One agent. One workflow.

Write the answers down.

1. PURPOSE

What decision or workflow is this agent meant to govern?

2. CONTEXT

What state can it see, and what state must persist?

3. TOOLS

What can it call, and which tools are intentionally absent?

4. AUTHORITY

What can it change without approval?

5. PROOF

What evidence must it produce before action?

6. HARNESS

What retries, budgets, logs, and checks surround it?

7. EVALUATION

What regime does the score actually cover?

8. HANDOFF

When does it ask, abstain, or escalate?

9. REVOCATION

What permission disappears after a bad run?Most teams can answer tools.

Some can answer authority.

Few can answer proof, harness, evaluation regime, handoff, and revocation in the same breath.

That is the diagnostic.

If you cannot answer purpose, you have a demo.

If you cannot answer context, you have hidden state.

If you cannot answer tools, you have inventory risk.

If you cannot answer authority, you have implicit delegation.

If you cannot answer proof, you have output without evidence.

If you cannot answer harness, you have unreproducible behaviour.

If you cannot answer evaluation, you have a score without a world.

If you cannot answer handoff, you have autonomy without judgement.

If you cannot answer revocation, you have no way for failure to change the system.

You may still have a useful agent.

You do not yet have a trustworthy one.

What to build instead

Do not start by asking which agent framework to use.

Start with one sentence:

We are evaluating whether this agent can perform this action in this environment under this permission boundary, and the decision governed by the result is this.

That sentence does more work than a diagram with six logos.

Then build the contract stack around it.

Give the agent the smallest tool surface that can do the job.

Package skills for local gotchas, not generic inspiration.

Write memory only when future decisions should depend on it.

Keep the harness visible.

Evaluate in the regime you plan to deploy.

Make traces inspectable.

Define escalation before the agent is confused.

Define revocation before the agent fails.

This is less exciting than another setup guide.

It is also the part that will decide who can actually use agents.

The serious agent market will split into two groups.

One side will sell reach: more connectors, more tools, more memory, more impressive demos.

The other side will sell agency: permissioned action, bounded autonomy, proof before commitment, escalation when context breaks, traces that survive, and revocation when trust is lost.

Reach will demo better.

Agency will survive contact with the organisation.

Field Card

If a single argument here changed what you were about to trust, the highest-leverage move is to subscribe on Substack. One piece a week, no filler.