Essay · 2026

The 90-Day Canopy Audit

A roadmap can look productive while most of the work is easy to displace. Run the Substrate Map on the last 90 days and force the next planning decision to change.

Most roadmap reviews reward completion. The better review asks which completions will survive the next change.

The meeting everyone recognises

You are in the end-of-quarter roadmap review.

The page looks good.

Green ticks everywhere. A few screenshots. A small chart moving up and to the right. Someone says the team shipped a lot despite the chaos. Everyone half-nods because the list is long enough to feel true.

That is the trap.

This is the moment most teams stop thinking.

Not because they are lazy. Because completion is comforting. A finished roadmap gives everyone a clean story: the team worked hard, the product improved, the quarter counted.

But there is a more useful question hiding underneath the shipped list:

How much of this work will still matter after the next large change?

That is the move: stop treating the shipped list as proof, and test it against the next environment.

That is the 90-day canopy audit.

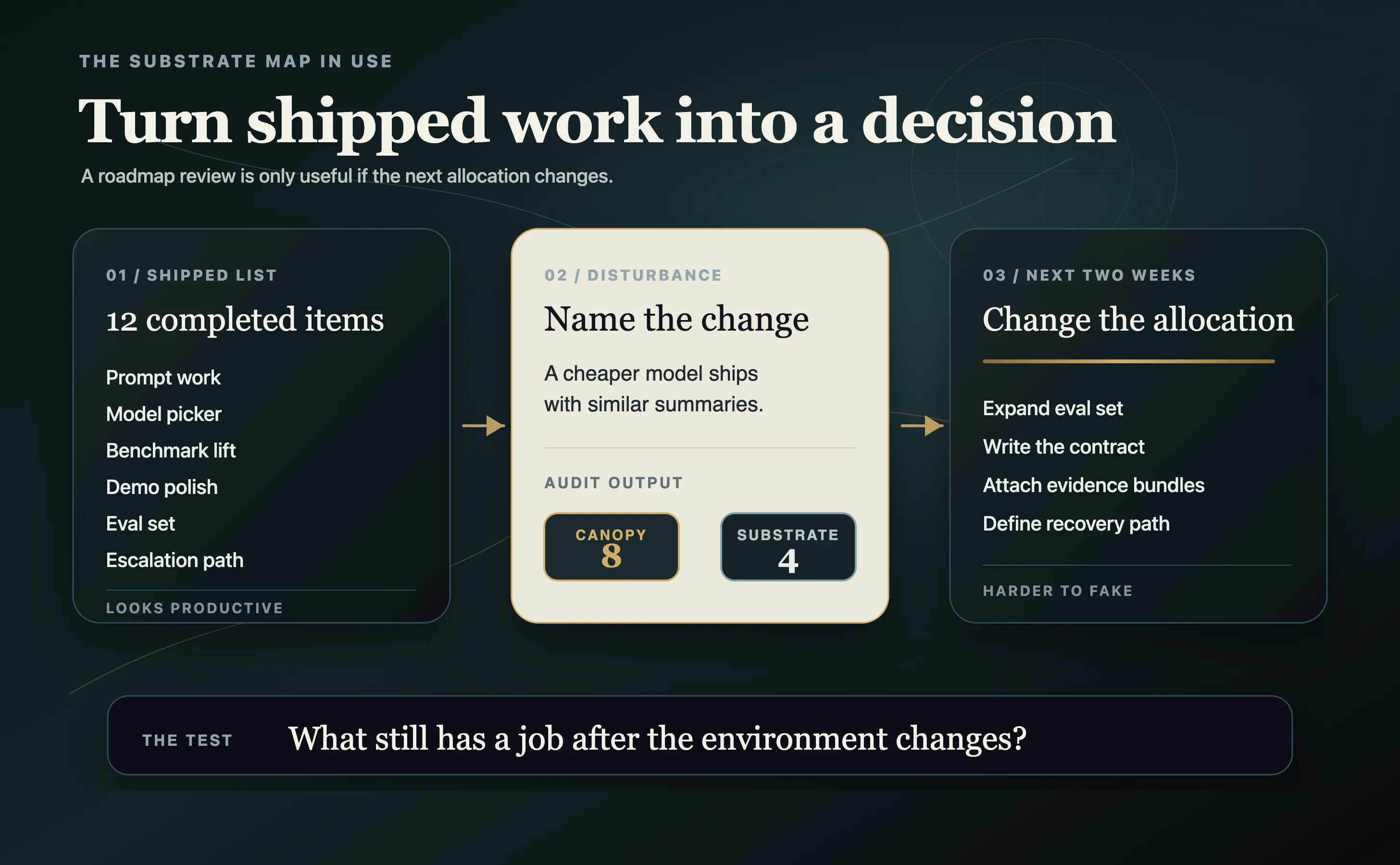

For the example below, imagine a team reviewing the last 90 days of work on an AI support product. It is a composite, not a case study. The point is the pattern.

The team shipped a new summary flow. It tuned retrieval settings. It ran a model comparison. It cleaned up prompt templates. It improved the demo. It moved from one agent framework to another. It added a model-picker interface. It lifted an internal benchmark. It also built a small eval set, documented recurring failure modes, added an escalation path for bad answers, and started recording evidence bundles for customer-visible outputs.

Twelve items shipped. Several are visible. The demo is better. The benchmark moved. The roadmap has enough completed work to make the team feel like the quarter was not wasted.

But shipped is not the same as durable.

The Substrate Map gives the review a different job.

The question is not:

What did we ship?

The question is:

What did we ship that the next change cannot easily displace?

Name the disturbance

The audit only works after you name a plausible change.

Not “AI gets better.”

Something concrete:

A cheaper model ships next quarter with support summaries that are almost as good as ours.

That one sentence changes the roadmap review.

Now every shipped item has to answer a harder question: if that model lands, does this work still have a job?

Some of it does.

Some of it does not.

The uncomfortable part is that the work people remember from the review is often the easiest to displace.

The polished demo.

The model switcher.

The benchmark lift.

The prompt library.

None of these are automatically bad. They may be needed. They may help the customer this month. They may get the product through the next sales call.

But if the surrounding environment changes, they are the first items you have to renegotiate.

The 12-item audit

Now tag the composite roadmap honestly.

The canopy side is crowded: prompt templates for the current model, RAG chunk-size tuning, a model comparison leaderboard, demo polish for the sales flow, a model-picker interface, migration to a new agent framework, benchmark tuning against the current frontier, and cleanup of the summary prompt library.

The substrate column is shorter: a golden eval set built from real failed conversations, a pinned evaluation contract that survives a model swap, a customer escalation path when the answer is wrong, and evidence bundles for customer-visible outputs.

That is eight canopy items and four substrate items.

The team shipped twelve things.

Two thirds of the quarter was canopy.

The point is not to worship the ratio. The point is to use it as a regime signal.

If the quarter is 70%+ canopy, the team is probably overfitted to the current regime. It may still be moving fast, but a model release, pricing change, customer workflow shift, or competitor feature can reset too much of the work.

If the quarter is roughly 50/50, that is normal. Most real work needs visible canopy and durable substrate. The question is whether the substrate side is becoming more explicit over time.

If the quarter is 70%+ substrate, protect it. That is usually the less glamorous work competitors do not copy from a screenshot: eval discipline, traceability, workflow depth, recovery paths, failure memory, and proprietary signal.

The trend matters more than the single number.

A canopy-heavy quarter before a launch might be fine. Three canopy-heavy quarters while the team says it is building a moat is a different diagnosis.

That does not mean two thirds of the work was stupid.

This is where the audit has to be honest or it becomes another management slogan. Canopy matters. Customers experience the canopy. Demos happen in the canopy. Interfaces, summaries, model choices, and prompt work can all be useful.

The problem is not that canopy exists.

The problem is believing a canopy-heavy quarter created durable progress.

The audit turns a shipped list into an allocation decision.

The boundary argument is the point

The most valuable part of the audit is not the final ratio.

It is the argument at the boundary.

Someone will say the model comparison leaderboard is substrate because it helps the team choose models faster. Maybe. But if the leaderboard only measures the current task mix, current prompts, current pricing, current model set, and current evaluator, it is still mostly canopy. The next release can reset the comparison.

Someone will say the agent-framework migration is substrate because the architecture is cleaner. Maybe. But if another framework becomes standard next quarter, or the model provider ships the capability directly, the migration may have been canopy with better engineering taste.

Someone will say the golden eval set is canopy because it was built for the current product. Probably not. If the examples came from real failed conversations, if they preserve the customer context, if they can be replayed against the next model, then the eval set keeps doing work after the model changes.

That is the boundary rule:

If the team cannot agree which column an item belongs in, tag it as canopy until the substrate is made explicit.

Disagreement is not noise. It is the instrument finding the hidden work.

In a real meeting, this is where the value appears.

The audit makes vague strategy concrete enough to argue with. Instead of someone saying, “This feels important,” they have to say what survives. Instead of someone saying, “The architecture is cleaner,” they have to say what the cleaner architecture keeps doing if the model, workflow, buyer, or cost curve changes.

That is why the tool is useful even when the ratio is imperfect.

It forces the team to surface the reason.

The decision changes

Before the audit, the team wants to keep going.

More prompt polish. Better model picker. Another benchmark pass. More demo flow. A cleaner interface for switching models.

After the audit, the next two weeks look different.

The team pauses the model-picker interface. It stops treating prompt-library cleanup as strategic progress. It keeps some UI work because customers need the product to be usable, but it no longer lets visible polish dominate the next planning cycle.

Instead, the team moves time into four things: expanding the eval set from 40 failed conversations to 120, writing the evaluation contract down so the score means the same thing after a model swap, attaching every customer-visible answer to a small evidence bundle, and defining the escalation path for answers with high reversibility cost.

The roadmap did not get slower.

It got harder to fake.

That is the difference between a shipping review and a substrate review. A shipping review asks whether work happened. A substrate review asks whether the work still matters after the world moves.

The important thing is that the audit changes a decision quickly.

If it only produces a prettier post-mortem, it failed.

The two-week reallocation is the point. You do not need a reorg. You do not need a new strategy process. You need one planning cycle where the next slice of work moves toward substrate before the old pattern hardens.

Run it on your own work

Open the last 90 days of your roadmap.

Do not start with the whole company. Start with one product line, one team, one portfolio, or one major bet.

You can do the first version in 20 minutes.

Name the most likely large change in the next 18 months. List the work shipped in the last 90 days. Tag each item as canopy or substrate. If the boundary is unclear, tag it as canopy until the substrate is explicit. Compute the ratio. Then change one allocation decision for the next two weeks.

Three prompts make the exercise less abstract:

If our current model advantage disappeared, which items would still matter?

If our main customer workflow changed, which items would still matter?

If a competitor copied the visible feature, which items would still matter?

The last step matters.

If the audit does not change the next allocation, it was only vocabulary.

The goal is not to call more things substrate.

The goal is to find the work that will still have a job when the environment changes, then protect it before the next quarter turns it invisible again.

A useful warning

Do not use this audit to make the team feel bad for shipping visible work.

That is the lazy version.

Use it to stop confusing visible work with compounding work.

A good quarter can contain plenty of canopy. The user still needs the product to look and feel right. The market still responds to surfaces. The interface still matters.

But if every quarter is dominated by work that has to be redone when the environment changes, you are not building durability.

You are renting momentum.

That is the shift: stop asking whether the quarter was busy. Ask how much of it still has a job after the world moves.

The free Substrate Map is here:

Run it before the next planning meeting.

Not because every roadmap should become substrate.

Because every roadmap should know how much of itself is not.

If you run the audit, which shipped item was hardest to classify? That boundary argument is usually where the real strategy work begins.

More free tools like this. Subscribe to get the next durability-lens resource the day it ships.

If a single argument here changed what you were about to trust, the highest-leverage move is to subscribe on Substack. One piece a week, no filler.