Essay · 2026

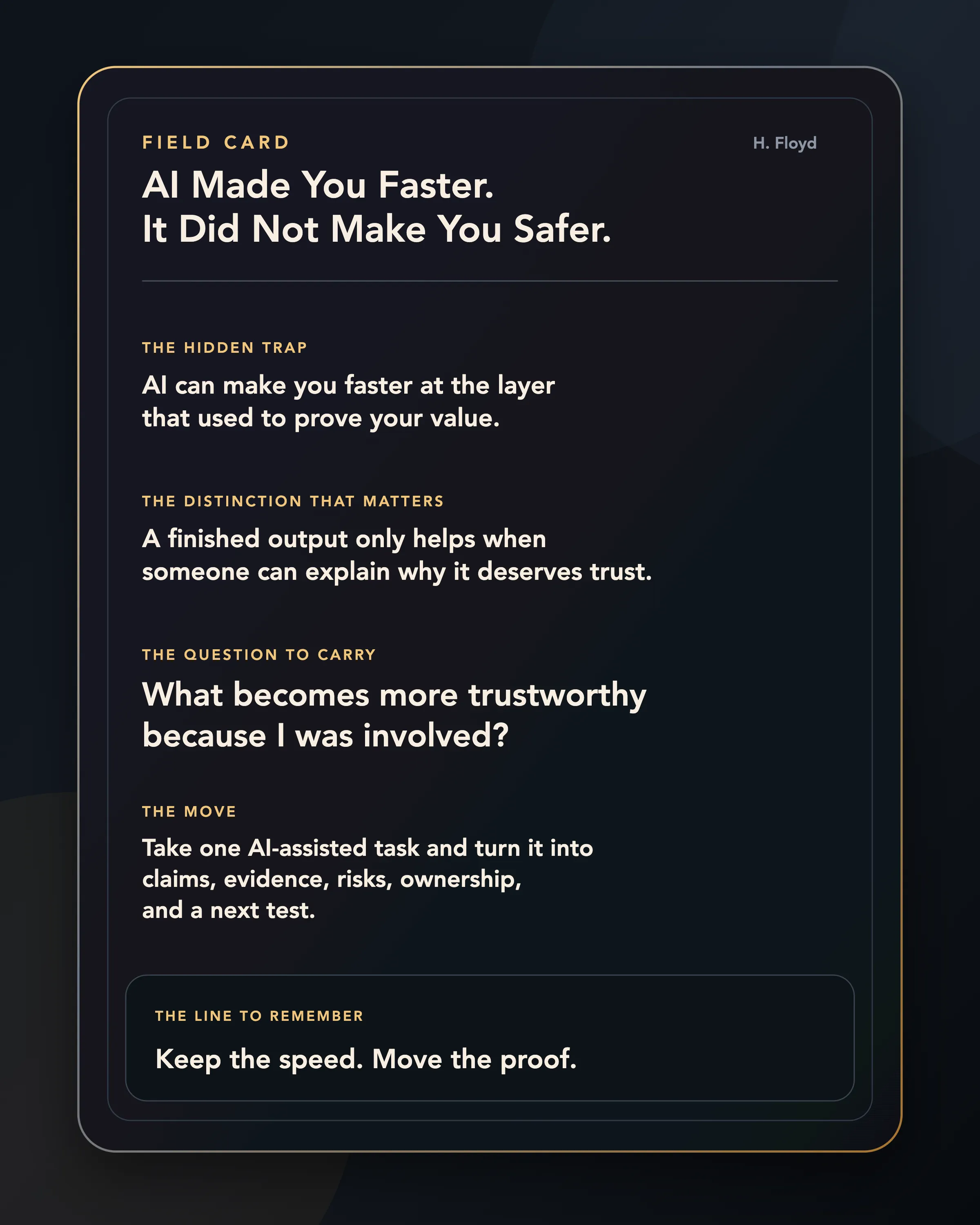

AI Made You Faster. It Did Not Make You Safer.

The strange thing about the AI productivity boom is that the people getting faster are not always getting more secure. Speed is becoming the surface. Proof is moving somewhere else.

The private feeling under the productivity story

The new anxiety does not always arrive as panic.

Sometimes it arrives at 11:17 on a Tuesday morning, just after the work goes strangely well.

You had blocked out the whole morning for the thing you were avoiding. A deck. A research memo. A customer summary. A bug you did not want to touch. A product plan that had been sitting in your notes for a week because the first draft felt too heavy to start.

Then the model does enough of it in twenty minutes.

It is not perfect. It is enough.

The page is no longer blank. The meeting transcript has structure. The argument has headings. The spreadsheet has an explanation. The first version of the plan exists. You can see the shape now.

For a moment, this feels like relief.

Then something quieter arrives underneath it.

If the thing that made me feel useful can appear this quickly, what exactly was scarce about me?

That is the feeling most AI productivity advice does not touch. It tells you to move faster, ship more, automate the boring parts, become a one-person team, learn agents, build workflows, and use the latest model before someone else does.

Some of that advice is useful.

But it skips the part people are actually carrying.

The obvious fear is that AI might take work away. The deeper fear is that AI is making the old proof of work weaker while everyone is still pretending productivity is the whole story.

You can feel more capable and less safe at the same time.

That is the paradox.

So this piece has to do more than diagnose the feeling.

By the end, you should have three things you can actually use:

- a way to tell whether AI is making your work safer or just faster

- a workflow for turning AI output into proof someone can trust

- a prompt pattern you can copy whenever the task matters

The aim is not to make you feel better about AI.

It is to give you a better instrument for deciding where your value should move next.

The data now has a human shape

Anthropic recently published research based on roughly 81,000 Claude users. The headline is bigger than people using AI at work. Everyone knows that now.

The interesting part is the contradiction.

People reported meaningful productivity gains. Anthropic rated the average inferred productivity gain at 5.1 on its scale, corresponding to “substantially more productive.” Among respondents who described productivity effects, 48 percent talked about expanded scope, while 40 percent talked about speed.

But one fifth of respondents also voiced concern about economic displacement. People in the most AI-exposed jobs mentioned job threat roughly three times as often as people in the least exposed jobs. Early-career workers were more nervous than senior workers. And the people reporting the largest speedups were also more likely to worry about AI’s job impact.

That last point matters.

The speedup did not automatically produce confidence.

Sometimes the speedup was the reason confidence cracked.

Computerworld framed the same tension as the AI workplace paradox: higher productivity, higher anxiety. Developers, IT workers, market researchers, QA analysts, support specialists, and other exposed roles are not standing outside the technology, speculating about a distant future. They are using the tools. They are feeling the acceleration directly.

This is why the conversation feels stranger than an ordinary technology cycle.

The tool is useful, and its usefulness is part of the fear.

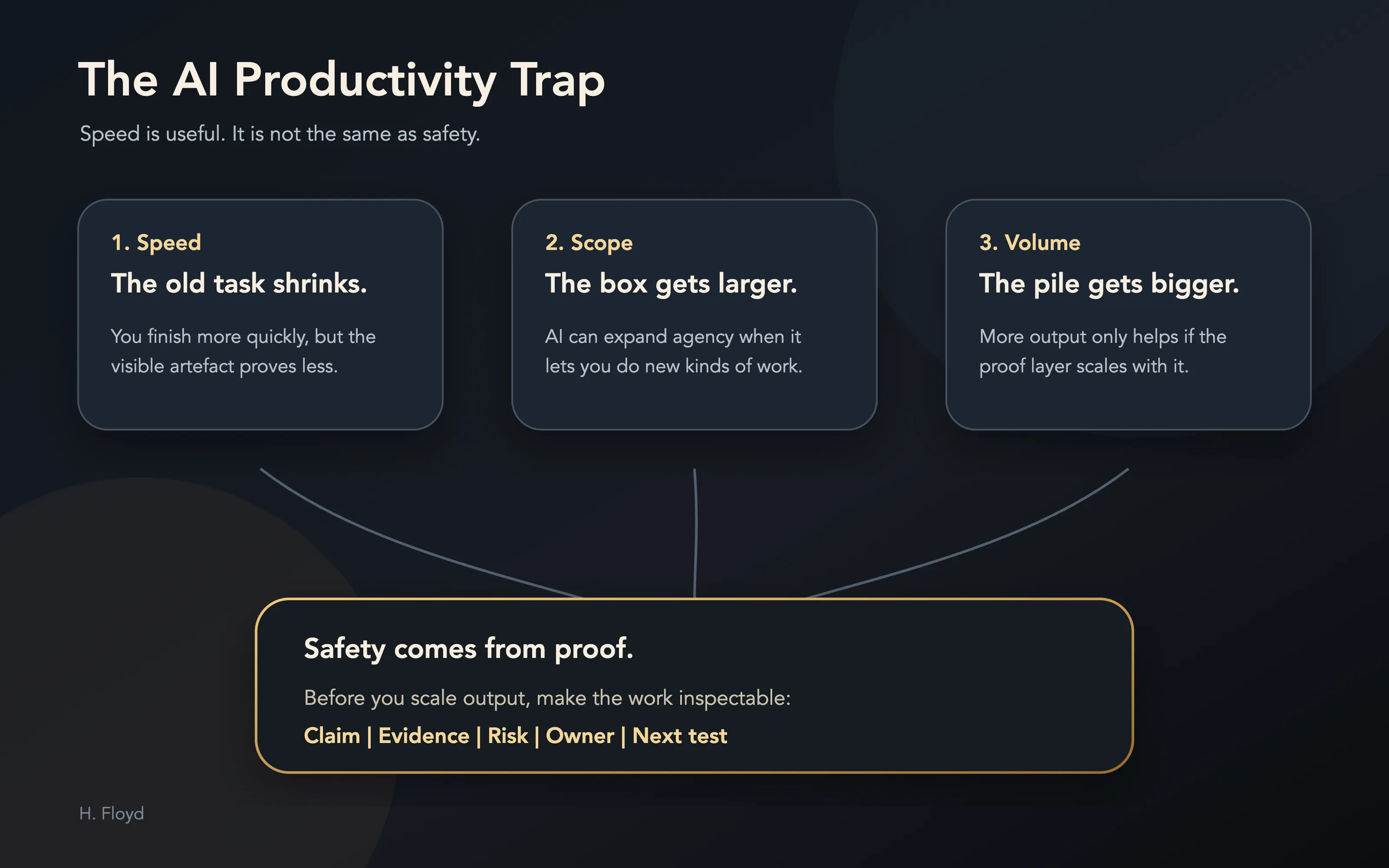

The trap is mistaking speed for safety

The optimistic version says productivity is protection.

If you use AI well, you become faster. If you become faster, you become more valuable. If you become more valuable, you become safer.

That chain sounds reasonable until everyone else gets access to the same speed.

Speed protects you only while speed is scarce.

Once speed becomes ambient, it stops being the proof. It becomes the baseline. The task that used to take a morning now takes twenty minutes. The analysis that used to look impressive now looks normal. The clean first draft no longer proves you wrestled with the problem. The slide no longer proves you saw the structure. The code no longer proves you understood the trade-off. The summary no longer proves you read the source carefully.

The visible artefact still matters.

It just means less than it used to.

This is the same pattern that appears whenever a layer gets cheap. The bottleneck migrates. When generation gets cheaper, verification gets more valuable. When output gets easier, judgement gets more important. When speed becomes common, the question moves from “Can you produce?” to “Can anyone trust what you produced?”

That is where the anxiety comes from.

People are competing with a new standard of evidence.

There are two kinds of AI productivity

The Anthropic data separates something important: scope and speed.

Speed means AI helps you do a task faster.

Scope means AI helps you do something you could not do before.

Those do not feel the same.

The useful question is whether the speedup moved you toward a stronger proof layer, or merely made the old surface cheaper.

If AI lets a founder build a prototype, a designer test more visual directions, a marketer analyse customer interviews, or a non-technical operator make a tool that used to require an engineer, that can feel like expanded agency. The person is moving inside a larger box.

But when AI mainly accelerates the work you were already paid to do, the feeling can turn unstable.

The task shrinks.

The expectation rises.

The proof weakens.

What used to count as a full day becomes half a day. What used to be impressive becomes table stakes. What used to be a training ground becomes automated away before it can teach anyone.

Computerworld quoted Sanchit Vir Gogia making a point every manager should sit with: faster generation can raise expectations on quality, and more output can feed decision pipelines that were already constrained. In some cases, the system becomes heavier, not lighter.

That is the part the productivity story misses.

AI does not enter a clean system. It enters existing approval chains, status games, hiring ladders, review rituals, political incentives, overloaded managers, insecure juniors, under-defined roles, and metrics that already confused movement with progress.

Acceleration inside a confused system does not automatically produce clarity.

Sometimes it produces faster confusion.

The entry-level problem is really a proof problem

One of the most important lines in the Computerworld piece is not about job loss directly.

It is about the path into the job.

Basic coding, documentation, routine analysis, QA, structured support, and first-pass research are often described as low-level work. That makes them sound expendable. But for a person becoming competent, low-level work is not just production. It is training.

The junior analyst builds the simple model before they learn which assumptions matter.

The support rep handles repetitive tickets before they understand the product’s real failure modes.

The marketer writes the bad first drafts before they develop taste.

The developer fixes small bugs before they can reason about architecture.

The researcher summarises sources before they can see what the sources are hiding.

If AI compresses that layer, the organisation may feel more efficient now and discover later that it has quietly damaged the apprenticeship path that produced judgement.

This is why “AI will automate the boring work” is too simple.

Some boring work is waste.

Some boring work is load-bearing.

You do not know which until you ask what capacity the friction was building.

If the friction was only moving information from one box to another, automate it.

If the friction was teaching the person how the system fails, be careful.

You may be removing the part of the work that turned exposure into judgement.

What still proves you are valuable?

When output gets cheap, value does not disappear.

It moves.

The old proof was often attached to the surface: the memo, the deck, the clean code, the finished research, the generated strategy, the polished artefact.

The new proof has to move closer to the system around the artefact.

That means your safest work is no longer just the part that produces the answer. It is the part that makes the answer worth trusting.

There are five places to look.

Problem choice: did you aim the tool at the right question?

Source judgement: did you know what evidence deserved belief?

Rejection: did you know which plausible output to delete?

Ownership: can you explain and stand behind the final version?

Learning loop: does the work make the next decision better, or only create the next artefact faster?

This is the migration map.

If AI makes the visible artefact easier to produce, your value has to move into the judgement system that decides what gets produced, what gets trusted, what gets rejected, and what gets shipped.

That is the article in one sentence:

Stop trying to be the fastest producer of the surface. Become the person who can make the surface trustworthy.

The work is not gone. The work has moved.

This is the mistake in both the panic and the hype.

The panic says AI will do the work.

The hype says AI will free us from the work.

Both assume the work is the visible task.

But in most serious knowledge work, the task was never the whole job. The task was the part of the job that could be named.

Write the memo.

Fix the bug.

Summarise the meeting.

Build the model.

Draft the plan.

Make the deck.

Compare the vendors.

Ship the feature.

Underneath those tasks was the harder layer: knowing what mattered, noticing what was missing, making trade-offs, protecting context, earning trust, sequencing effort, resisting bad incentives, and deciding what you were willing to stand behind.

AI attacks the named layer first.

That does not make the unnamed layer less important.

It makes the unnamed layer harder to avoid.

The person who only became faster at producing surfaces may feel less safe because the market can now buy more surfaces. The person who becomes better at deciding which surfaces deserve trust is moving toward the new bottleneck.

That is the difference.

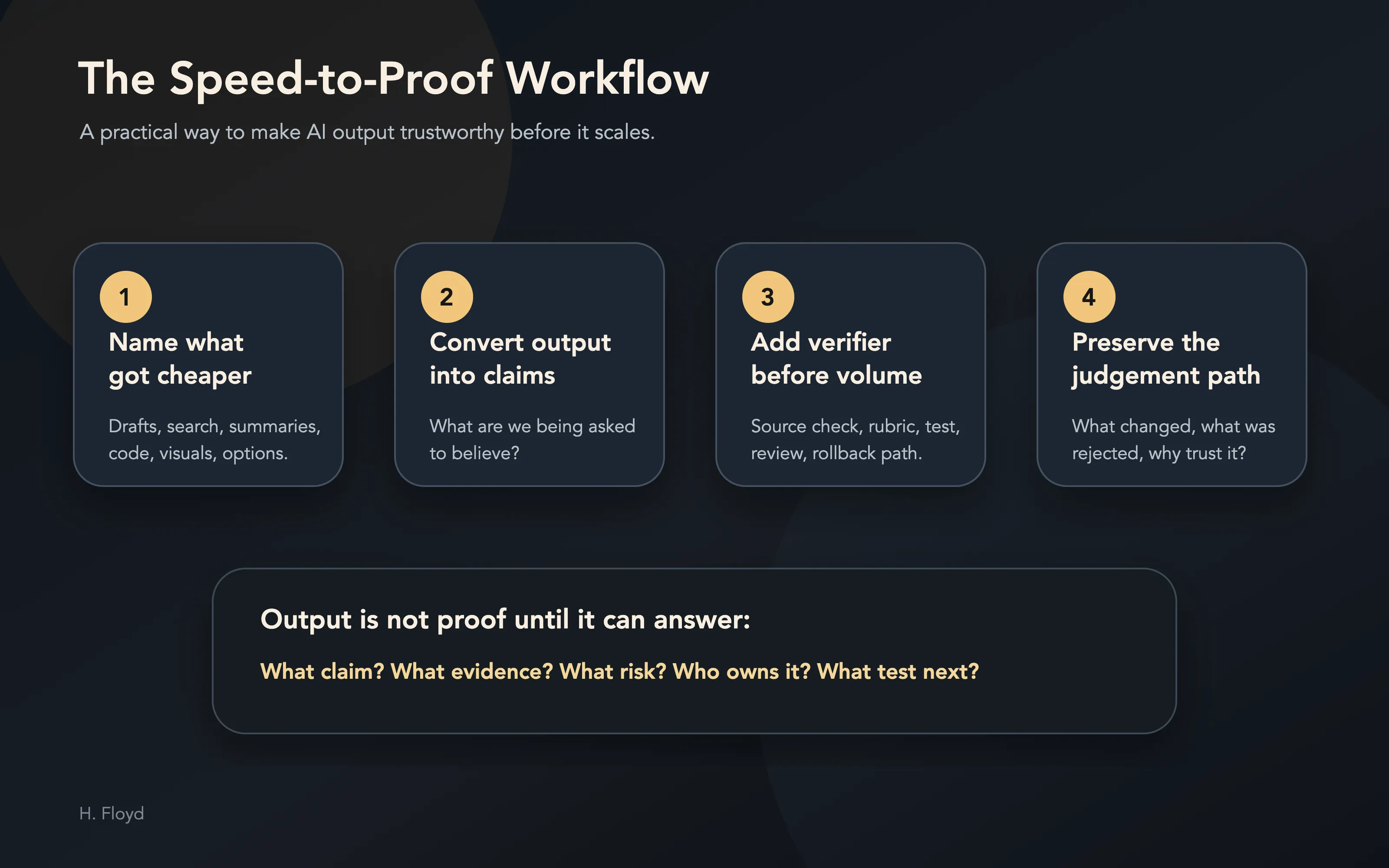

The speed-to-proof workflow

The practical move is simple.

If AI has made a task faster, do not stop at the speedup. Run the output through four layers.

This is the part to actually use. Open a real AI-assisted task from this week and walk it through the sequence below. A meeting summary, product plan, hiring screen, research memo, code change, customer email, investment note, content draft, or strategy doc all work.

A saveable workflow for turning AI output into something another person can trust.

1. Name what got cheaper

Start by identifying the layer AI compressed.

Did it make the first draft cheaper?

Did it make search cheaper?

Did it make summarising cheaper?

Did it make visual exploration cheaper?

Did it make coding the obvious path cheaper?

This matters because the cheapened layer is no longer where you should look for safety. If AI made first drafts cheap, a first draft is not proof. If AI made summaries cheap, a summary is not proof. If AI made prototypes cheap, a prototype is not proof.

The first move is to stop treating the compressed layer as the evidence layer.

2. Convert the output into claims

AI output is usually shaped like an artefact: memo, plan, draft, slide, answer, summary, code.

Proof starts when you break that artefact into claims.

Use this format:

Claim:

What is this output asking us to believe?

Evidence:

What source, observation, customer fact, test, or prior decision supports it?

Risk:

Where could this be wrong, brittle, misleading, or overconfident?

Owner:

Who is willing to stand behind this after the model disappears?

Next test:

What would we check before acting on it?

That small format changes the work.

The output stops being a polished surface and becomes an object someone can inspect.

This is why the workflow matters. Most AI tools make artefacts easier to create. This makes artefacts easier to trust.
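For anyone who keeps this kind of record in a script or notebook, the claim format can be sketched as a small data structure. This is a minimal illustration, not a prescribed tool: the `ClaimRecord` class, its field names, and the `is_decision_grade` check are hypothetical names introduced here, and the sample values are made up.

```python
from dataclasses import dataclass, fields


@dataclass
class ClaimRecord:
    """One inspectable claim pulled out of an AI-generated artefact (hypothetical structure)."""
    claim: str           # what the output asks us to believe
    evidence: str = ""   # source, observation, customer fact, test, or prior decision
    risk: str = ""       # where it could be wrong, brittle, misleading, or overconfident
    owner: str = ""      # who stands behind it after the model disappears
    next_test: str = ""  # what to check before acting on it

    def missing_fields(self) -> list[str]:
        """Return the names of the fields that are still blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def is_decision_grade(self) -> bool:
        """A claim counts as inspectable only when every field is filled in."""
        return not self.missing_fields()


# Made-up sample: a claim with no evidence, risk, owner, or test recorded yet.
record = ClaimRecord(claim="Customers are leaving because onboarding is confusing.")
print(record.missing_fields())
print(record.is_decision_grade())
```

The point of the blank-field check is only to make gaps visible. A claim with no owner or no next test is still a polished surface, not proof.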

3. Add a verifier before you add volume

Most people respond to AI speed by increasing output.

More drafts. More options. More experiments. More content. More code. More analysis.

That is tempting, but it is often the wrong order.

If generation got faster, the next investment should be verification.

Before increasing volume, decide what would make the output trustworthy:

- source check

- customer check

- counterexample search

- second-person review

- test suite

- evaluation rubric

- decision log

- rollback path

The rule is simple:

Do not scale the output until you have scaled the proof.

Otherwise AI has not made you safer. It has made your uncertainty more productive.

4. Build memory around the decision

The final layer is memory.

Not memory in the vague “save your prompts” sense. Decision memory.

For any meaningful AI-assisted work, preserve four things:

- what the model produced

- what you changed

- what you rejected

- why the final version deserved trust

This is where a person becomes harder to replace.

Their advantage is the visible judgement path, not the typing.

A worked example: the meeting summary that can hurt you

Take the most ordinary possible example: a meeting summary.

This is exactly the kind of work people are happy to hand to AI because it feels low-risk. The meeting happened. The transcript exists. The model can summarise it. Everyone gets their time back.

But a summary becomes consequential the moment people act on it.

Imagine a product team has a customer call about churn. The AI summary says:

Customers are leaving because onboarding is confusing. Next step: simplify onboarding emails and create a better help centre flow.

That sounds useful. It might even be right.

But if you ship from that summary, you have skipped the proof layer.

Run the workflow.

What got cheaper?

The transcript-to-summary step. The old proof was: “someone listened carefully and understood the customer.” That proof is now weaker because a plausible summary can arrive without careful listening.

What claims are being made?

Claim one: customers are leaving because onboarding is confusing.

Claim two: email and help-centre changes are the right response.

What evidence supports them?

Maybe three customers mentioned confusion. But did they churn because of it, or did they mention it after already deciding the product was not valuable enough? Did power users say the same thing? Did support tickets show the same pattern? Did activation data show drop-off at onboarding, or later when the product failed to become a habit?

What is the risk?

The team might fix the easiest visible complaint while missing the real retention problem.

Who owns the judgement?

Someone has to say: “I believe onboarding is the bottleneck” or “I think onboarding is only the polite surface reason.”

What is the next test?

Pull the last ten churned accounts. Compare transcript complaints with usage data. Look for whether confusion appears before disengagement or after it. Then decide whether onboarding is the cause, a symptom, or a convenient story.

Now the AI summary has become useful.

The summary became useful because it was turned into claims, evidence, risks, ownership, and a next test.

That is the difference between an AI output and a decision-grade artefact.

Two prompts: one makes output, one builds proof

Most AI prompting still aims at surface production.

That is fine for low-stakes work. It is weak for anything that affects customers, strategy, hiring, money, reputation, or trust.

Compare the difference.

Surface prompt:

Summarise this meeting transcript and give me the key action items.

Proof prompt:

Turn this meeting transcript into a decision-grade summary.

Separate:

1. Decisions actually made

2. Open questions still unresolved

3. Claims that need evidence

4. Risks or assumptions people glossed over

5. Actions, owners, and deadlines

6. What should be verified before anyone acts on this

If the transcript does not support a conclusion, say so.

The first prompt makes a cleaner artefact.

The second prompt creates a trust surface.

Another example:

Surface prompt:

Create a launch plan for this product.

Proof prompt:

Create a launch plan for this product, but organise it as a proof system.

For each recommendation, include:

- the customer belief it depends on

- the evidence we currently have

- the weakest assumption

- the first cheap test

- the failure signal that would make us stop

- the person who owns the decision

Do not optimise for a confident plan. Optimise for a plan we can safely learn from.

This is the shift.

Do not ask AI only to produce the thing.

Ask it to expose what would make the thing trustworthy.

That one change is often enough to separate useful AI work from impressive-looking noise.

What managers should learn

If you manage people, do not treat AI productivity as a simple capacity increase.

The lazy version is to say: the same person can now do twice as much, so expectations should double.

That may work for a quarter.

It may also destroy the slower system that produces competence.

If AI removes junior tasks, you need a new apprenticeship path. If AI increases output, you need a stronger verification layer. If AI expands scope, you need clearer ownership. If AI makes everyone faster, you need better judgement about which work deserves speed.

The management question is not:

How much more can we get?

The better question is:

What proof of competence did the old work produce, and what replaces it now?

That question turns into three concrete management moves.

First, protect an apprenticeship layer.

If AI removes junior tasks, deliberately replace the learning function those tasks used to serve. A junior person still needs reps in debugging, source judgement, customer contact, messy data, ambiguous trade-offs, and the slow discovery of what “good” looks like.

Do not let “the model can do that now” become “nobody learns how the system works.”

Second, create a verification budget.

For every AI-assisted workflow, decide how much time belongs to output and how much belongs to proof. A rough starting rule:

If the work affects a real decision, spend at least 30 percent of the saved time on verification.

If a task used to take three hours and AI makes it take one, do not automatically fill the extra two hours with more tasks. Put some of that time into checking assumptions, testing edge cases, improving the rubric, or teaching someone how the decision is made.
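The arithmetic behind that starting rule is short enough to write down. The sketch below assumes the 30 percent share from the rule above. The function name `verification_budget` and its parameters are illustrative, not part of any tool.

```python
def verification_budget(before_hours: float, after_hours: float, share: float = 0.30) -> float:
    """Minimum hours to reserve for verification out of the time AI saved."""
    saved = max(before_hours - after_hours, 0.0)  # no budget if nothing was saved
    return saved * share


# The three-hour task that now takes one hour: two hours saved.
budget = verification_budget(before_hours=3.0, after_hours=1.0)
print(f"{budget * 60:.0f} minutes reserved for verification")  # 36 minutes
```

On those numbers, at least 36 of the 120 saved minutes go to proof before any of the remainder is filled with new tasks.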

Third, require an ownership receipt.

Before important AI-assisted work is shipped, ask for a short note:

What did AI produce?

What did the human change?

What was rejected?

What evidence supports the final version?

What would make us revise this?

Who owns the outcome?

This is not bureaucracy.

It is how you prevent productivity from quietly destroying accountability.

Most organisations will not ask that early enough.

They will celebrate acceleration, then wonder why trust, training, review quality, and decision clarity did not improve at the same rate.

That is how productivity becomes a trap.

What individuals should learn

If you are using AI and feeling the strange mix of power and unease, do not dismiss it as irrational.

The feeling is information.

It may be telling you that your work has been over-identified with a surface AI is now compressing.

That does not mean you are obsolete.

It means your old proof is weakening.

Move your effort upward.

Getting faster at drafting helps. Deciding what deserves to be drafted matters more.

Generating more options helps. Deleting the wrong ones matters more.

Summarising faster helps. Knowing which source deserves belief matters more.

Automating the workflow helps. Owning the exception matters more.

Producing the answer helps. Explaining why the answer should be trusted matters more.

The safest person in the AI workplace will not be the person with the most outputs.

It will be the person whose judgement becomes more visible as output gets cheaper.

The practical move is to keep a proof log for one week.

Nothing elaborate. Just five columns:

- Task

- What AI made faster

- What still required judgement

- What proof I added

- What I learned

At the end of the week, look for the pattern.

If most of the log is “AI made me faster” and the proof column is empty, you are becoming more efficient at the surface.

If the proof column gets stronger, you are building the layer that travels.

That is the difference between using AI as an output multiplier and using it as a judgement amplifier.
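If the log lives in a spreadsheet, the end-of-week check can be a few lines of code. This is a rough sketch under stated assumptions: the column names, the `proof_coverage` helper, and the two sample rows are all invented for illustration.

```python
import csv
import io


def proof_coverage(rows: list[dict[str, str]]) -> float:
    """Share of logged tasks whose proof column is not empty."""
    if not rows:
        return 0.0
    with_proof = sum(1 for row in rows if row.get("proof_added", "").strip())
    return with_proof / len(rows)


# Made-up sample log: one task with proof attached, one without.
SAMPLE_LOG = """task,ai_made_faster,required_judgement,proof_added,learned
Churn call summary,transcript to summary,whether onboarding is cause or symptom,compared complaints with usage data,confusion showed up after disengagement
Launch plan draft,first draft,,,
"""

rows = list(csv.DictReader(io.StringIO(SAMPLE_LOG)))
print(f"{proof_coverage(rows):.0%} of logged tasks have proof attached")
```

A single percentage is a crude signal. It only flags weeks where the proof column stayed empty, and says nothing about whether the proof that was added is any good.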

If you want the shortest version, use this:

Before AI: What was hard?

After AI: What became easy?

Risk: What old proof got weaker?

Move: What proof do I need to add now?

That is the whole personal strategy. Keep the speed. Move the proof.

The real promise

AI made many people faster.

That is real.

It also made many people less sure what their speed proves.

That is real too.

The next few years will be full of advice telling people to use AI more, ship more, automate more, and become more productive. Some of that advice will help. Much of it will leave the deeper wound untouched.

Because the central question has changed.

What becomes more trustworthy because I was involved?

That is the standard.

The old tests are too small: whether you touched every word, refused the tool, or produced more than the person next to you.

The better test is whether your involvement made the work more true, more useful, more accountable, more connected to reality, and harder to misunderstand.

That is the layer to build now.

Keep the speed.

Move the proof.

The person who wins in the AI workplace will not be the one who looks busiest after the surface gets cheap.

It will be the one whose judgement is easiest to trust.
